On Wednesday, December 6th, 2023, Google unveiled its powerful new AI model, Gemini, to the public.

It’s Google’s largest and most capable AI model yet, and it boasts impressive multimodal capabilities.

The large language model (LLM) is Google’s answer to OpenAI’s line of GPT models, the most recent being GPT-4.

In particular, the release of ChatGPT caught Google with its metaphorical pants down, as the company was taken completely by surprise by the chatbot’s advanced capabilities.

They’ve been in ‘code red’ mode ever since, crunching long hours to release an AI language model that’s superior to OpenAI’s offerings.

Now that Gemini is finally here, they may have done just that. Google’s model handles a wide range of tasks, and you can use a combination of audio, text, image, and video prompts to communicate with it.

Check out this jaw-dropping video demo to see what we mean.

As you can see, Gemini is extremely smart, and it’s set to change the way users interact with AI bots.

From crisp image generation based on audio prompts to learning how to pronounce words in Mandarin correctly, Gemini’s uses are virtually endless.

Read on to learn more about Gemini’s exciting capabilities, as well as how you can use it to enhance your SEO and content creation.

What’s So Special About Google Gemini?

Gemini was built from the ground up to be natively multimodal, meaning it can understand text, image, video, and audio prompts (and any mix of them).

Other so-called ‘multimodal’ AI tools use separate models that they train to understand images, audio, and video.

For example, OpenAI pairs GPT-4 with separately developed and trained models for image generation and speech recognition (DALL-E and Whisper, respectively).

Gemini is different, as Google’s team developed a single multimodal model from day one, enabling it to understand all of these input types together.

It’s the brainchild of Google and Alphabet, Google’s parent company. DeepMind, the company’s AI research lab, also contributed heavily to Gemini’s development.

The model isn’t short on smarts, as it can solve complex math and physics problems. It’s also a capable programmer: it can generate high-quality code in various programming languages, and it can identify and fix coding errors.

Gemini is multilingual, and its multimodal nature makes it particularly effective in this area.

You can ask Gemini to translate other languages, confirm how to pronounce specific words, and make sense of international media (one of Gemini’s demos shows it summarizing a podcast spoken in another language).

In other words, Gemini is a leap forward in AI technology, and Google is certainly excited about it. They’ve even dubbed the current age as ‘The Gemini Era,’ which certainly shows their supreme confidence in their new large language model.

It’s Google’s hope that the world uses Gemini to enhance human knowledge, creativity, and productivity, but only time will tell if this turns out to be true.

How Does Gemini Stack Up to GPT-4?

The release of ChatGPT in November 2022 kicked off the AI Wars, and they’ve been raging fiercely ever since.

OpenAI shocked the world and admittedly caught Google off guard with the release of its flagship chatbot.

In the months following its release, every tech company – from Amazon to Microsoft – was eager to throw their hat into the ring.

Here we are only a year later, and the AI landscape looks drastically different.

Microsoft partnered with OpenAI, using GPT-4 to power the ‘new’ Bing, which features the AI-powered chatbot Copilot. It’s capable of answering users’ questions, generating images, creating original content, and more.

Amazon leaned on Lex, its service for building AI chatbots, and it’s also planning to use generative AI to enhance its online shopping and Alexa, its virtual assistant.

Even social media companies couldn’t resist the AI craze, as Snapchat released its My AI chatbot to its users in February 2023.

While these developments were happening, Google was biding its time in the background, putting the final touches and tweaks on Gemini, their secret weapon for taking over the tech world again.

Now that it’s finally here, how does it stack up? Does Google Gemini make GPT-4 look like Microsoft Sam, or does OpenAI’s LLM still hold up?

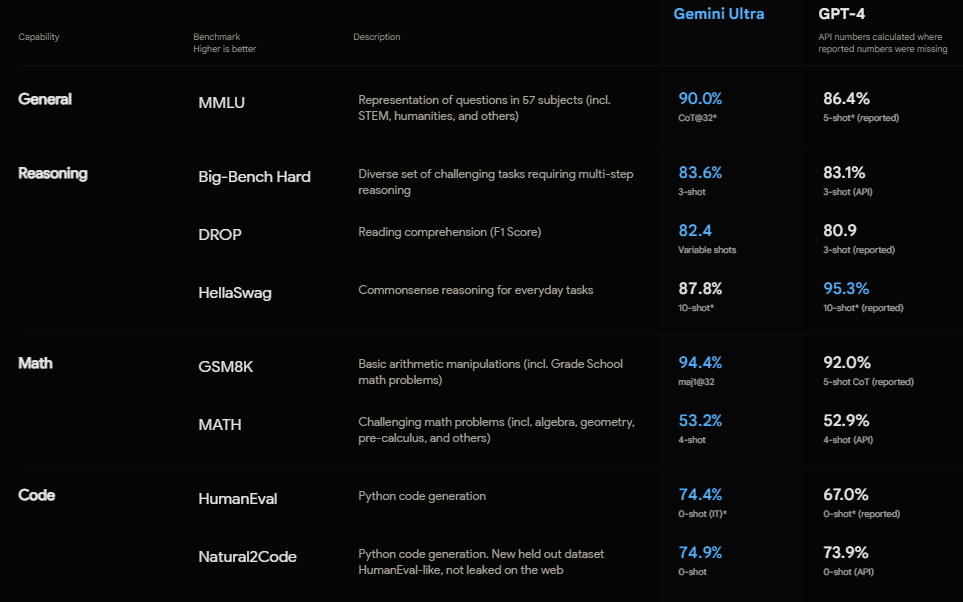

The report cards are in, and Google Gemini officially outperformed GPT-4 (and other language models) in 30 of the 32 academic benchmarks most commonly used to test an AI’s smarts, so to speak.

Previewing Gemini’s Multimodal Capabilities: What Can They Do for You?

Gemini doesn’t just outperform other language models in academic benchmarks, reasoning, and understanding. The importance of its multimodal capabilities can’t be overstated.

Why is that?

It’s because Gemini’s multimodality holds so much potential for how you can interact with and use AI tools at your business.

As an example, let’s say you’re drawing a blank on how to write a description for one of your newest products.

Trying to explain what your product looks like as well as describe its functions in a text prompt would be exhausting and likely ineffective.

With Google Gemini, you can simply upload an image of your product and then ask, “How would you write a product description for this?”

The AI will process your question and then analyze the image provided to understand what you want. From there, it will write an original product description based on what it sees. Next, you can tweak the description by further prompting the AI until it’s picture-perfect.
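If you’d rather script this than type into a chat window, here’s a minimal sketch of how a photo and a question could be bundled into one request. Treat the details as assumptions: the field names (`contents`, `parts`, `inline_data`) reflect our understanding of the Gemini API’s JSON format and may change, the function name `build_product_prompt` is hypothetical, and the sample bytes stand in for a real product photo. Nothing is actually sent to Google here.

```python
import base64
import json


def build_product_prompt(image_bytes: bytes, question: str,
                         mime_type: str = "image/jpeg") -> dict:
    """Bundle a text question and an image into one multimodal request body.

    Assumption: the field names mirror the Gemini API's JSON format;
    check Google's current documentation before relying on them.
    """
    # Binary image data has to be base64-encoded to travel inside JSON.
    encoded_image = base64.b64encode(image_bytes).decode("ascii")
    return {
        "contents": [
            {
                "parts": [
                    {"text": question},
                    {"inline_data": {"mime_type": mime_type, "data": encoded_image}},
                ]
            }
        ]
    }


# Hypothetical stand-in for the bytes of a real product photo.
fake_photo = b"\xff\xd8\xff\xe0 not a real JPEG"
payload = build_product_prompt(
    fake_photo, "How would you write a product description for this?"
)
print(json.dumps(payload, indent=2))
```

The point of the sketch is that the question and the image travel together as parts of a single prompt, rather than the image being described in words first.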

Gemini can also understand and work with video prompts.

Imagine that you have a popular video on your site that you want to convert into a blog post (without repeating the script verbatim).

All you have to do is upload the video to Gemini and then ask it to summarize the video in its own words.

Presto! You’ve got an original piece of content that covers the same topic as your video, albeit in a different way.

You can repeat the same process for your competitor’s content, too.

For instance, imagine if the video you showed Gemini was from a competing website. In that case, you’d be able to create a similar piece of content without risking plagiarism.

The Different Versions of Google Gemini

Google didn’t create Gemini as a single AI language model. Instead, the current release, Gemini 1.0, comes in three versions, with even more on the horizon.

Why did they make so many versions?

Google made multiple versions of Gemini because each one is customized for specific tasks.

For example, Gemini’s lighter version, Nano, is built specifically for on-device tasks (smartphones, tablets, and other devices powered by Android).

The beefier versions are reserved for powering Google services like Bard and SGE (Search Generative Experience).

Here’s a look at the three distinct versions of Gemini that we know about so far:

- Gemini Nano. The lightest version of Gemini, Nano, was built to run on smartphones like the Google Pixel 8. It’s designed to handle on-device tasks that require efficient AI processing without the need to connect to external servers. That means you’ll be able to perform AI-powered tasks like summarizing text without having to connect to the internet.

- Gemini Pro. This is the most powerful version of Gemini that’s been released thus far, and it’s now powering Bard, Google’s AI chatbot. Pro is able to understand complex queries and features speedy response times (it runs on Google’s data centers). Google claims Gemini Pro is the best version of the model for scaling the AI across a wide range of tasks.

- Gemini Ultra. The most capable version of Gemini, Ultra, has yet to see a public release. It should be complete after the initial phase of testing with the Pro and Nano models. This is the version of Gemini that outscored other language models on 30 of 32 academic benchmarks. Google designed Ultra to handle extremely complex tasks, such as complicated mathematical calculations and physics problems.
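To make the split between the three versions concrete, here’s a small illustrative sketch that encodes the descriptions above as a lookup table. It is not an official spec: the `GeminiTier` class and the `suggest_tier` helper are hypothetical names we made up, and the selection rules are simply our reading of the list above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GeminiTier:
    name: str
    best_for: str


# Details paraphrased from the list above; not an official spec.
TIERS = {
    "nano": GeminiTier("Gemini Nano", "on-device tasks without an internet connection"),
    "pro": GeminiTier("Gemini Pro", "a wide range of tasks, served from Google's data centers"),
    "ultra": GeminiTier("Gemini Ultra", "extremely complex tasks (not yet publicly released)"),
}


def suggest_tier(needs_offline: bool, highly_complex: bool) -> GeminiTier:
    """Hypothetical helper: map a task's needs to the tier described above."""
    if needs_offline:
        return TIERS["nano"]   # the only version described as running on-device
    if highly_complex:
        return TIERS["ultra"]  # built for the hardest tasks
    return TIERS["pro"]        # general-purpose default


print(suggest_tier(needs_offline=True, highly_complex=False).name)  # Gemini Nano
```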

The Future of Generative AI in SEO and Content Creation

How will Google Gemini affect the digital marketing space?

That’s the million-dollar question right now, and it has some excited while others are about ready to head underground.

Google Gemini’s advanced features are like any other tool; it’s up to the user whether it’s used for good or bad.

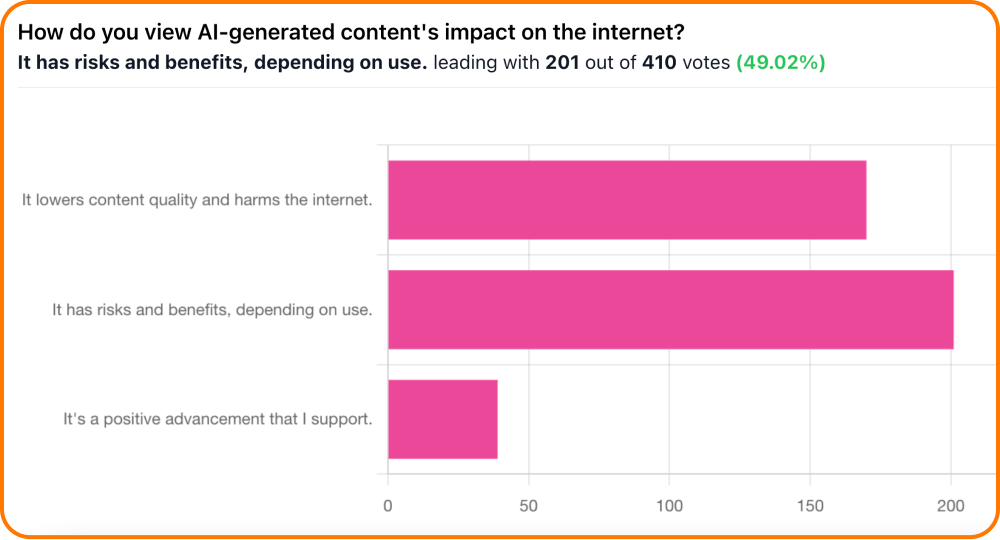

Here’s a look at a poll that asked digital marketers what they think of AI-generated content’s impact on the internet:

AI content has risks and benefits, depending on the use.

It harkens back to the classic white hat/black hat dichotomy that the SEO world has long used.

White-hat SEOs can use Google Gemini to generate high-quality original images and brainstorm ideas for content.

Black-hat SEOs will likely use Gemini-powered tools for nefarious reasons, such as generating spammy content and attempting to find ways to access sensitive information without the user’s permission.

At The HOTH, we deliver the best of both worlds by always using human writers and editors, even for our AI services like AI Content Plus.

To us, AI is an extremely helpful supplemental tool for our team of experienced writers, editors, link builders, and graphic designers.

Much like the classic motto ‘create content for humans first, search engines second,’ we believe in creating content with humans first, AI tools second.

Thriving in the Age of Gemini, SGE, and Generative AI

Google Gemini is officially here, and it’s capable of some mind-blowing things.

At this point, there’s not much use in avoiding AI-powered tools because they’re clearly not going anywhere.

Instead of trying to pretend that AI doesn’t matter, why not put it to work for you by strengthening your existing processes?

SGE will soon see widespread adoption, and it’s only a matter of time until Gemini Ultra is unveiled to the public – so it’s best to get prepared sooner rather than later.

If you need help with your SEO in the age of AI, don’t wait to check out HOTH X, our managed SEO service that includes strategies to adapt and thrive with SGE.
